When you pick an AI model in 2026, you face a real choice between Gemini 2.5 Pro and Claude Opus 4. Each brings different strengths that can change how you work, whether you need help writing code or analyzing video files. This guide walks you through the facts so you can decide which one best fits your tasks. Both model families improved quickly after their 2025 launches, and their later versions still show the same core differences today.

No single model wins every test. Instead, you get clear trade-offs: Claude often leads in careful coding work, while Gemini gives you more value and more flexibility across data types. Read on to learn exactly how these differences affect your projects and budget.

Getting to Know These Frontier AI Models

What Sets Gemini 2.5 Pro Apart

Gemini 2.5 Pro launched on March 25, 2025, as Google DeepMind’s most intelligent model yet, with improved reasoning and coding over previous releases. The May 6 I/O edition preview focused on coding, web development, and video understanding, and a stable release followed on June 17, 2025. You get a massive context window that accepts up to 1,048,576 tokens of input, and output can reach 65,536 tokens. The model handles text, images, audio, and video natively and supports strong tool use.

This setup helps you tackle jobs that mix different information types. Early versions had a knowledge cutoff in early 2025, though later updates kept the model more current. You benefit from fast responses and smooth connections to other Google services. When your work involves reviewing long reports or building apps from examples, Gemini makes the process simpler and cheaper.

Claude Opus 4 and Its Updates

Claude Opus 4 arrived on May 22, 2025, as Anthropic’s top offering and earned praise as the best coding model at release. Its focus was on long, complex tasks that require hours of steady effort. Later updates brought Opus 4.5 in November 2025 and Opus 4.6 in February 2026, adding a 1-million-token context window in beta and output of up to 128,000 tokens. New features included Agent Teams, a feature that coordinates several sub-agents working toward your goals.

Adaptive thinking modes let the model adjust how much effort it spends on a problem. Improved computer use and autonomous abilities make it reliable for detailed work, and developers who test it often note strong prompt following and clean final results. If you run projects that need sustained precision, this model family delivers consistent quality.

Key Figures Driving Innovation

Demis Hassabis and the Google DeepMind team shaped Gemini with an emphasis on scale and multimodal skills, which shows in the model’s ability to process many data forms at once. Dario Amodei leads Anthropic with a strong focus on alignment and safety, which shows in Claude’s careful behavior during sensitive tasks. You can see their influence when you compare how each model avoids mistakes or stays on topic during long sessions.

These approaches affect which AI feels safer for your important work. One side pushes rapid capability growth, while the other stresses responsible use. Both directions benefit you as the models evolve through 2026.

Benchmark Results That Matter to You

Coding and Software Engineering Tests

Coding benchmarks reveal clear patterns. Claude Opus 4 scored 72.5 percent on SWE-Bench Verified, while Gemini 2.5 Pro reached 63.2 percent, and Claude Opus 4.6 later climbed to roughly 80.8 percent. Claude also showed strength on Terminal-Bench and TAU-bench in early tests. These results matter because they measure real software engineering skills: SWE-Bench Verified, for example, asks a model to resolve real GitHub issues in existing Python codebases.

When you ask an AI to review thousands of lines or suggest architecture changes, Claude often produces higher-quality output, so you spend less time correcting mistakes. Many developers report that its responses feel more thoughtful and closer to what a skilled engineer would write. This edge grows when tasks stretch over multiple steps or require a deep understanding of large projects.

Reasoning and Math Performance

Math and reasoning tests show another side of the story. In direct matchups, Gemini 2.5 Pro scored 83.0 percent on AIME 2025 compared with Claude Opus 4’s 75.5 percent, and 83.0 percent on GPQA Diamond against Claude’s 79.6 percent. These numbers highlight Gemini’s strength at solving structured problems accurately.

Claude narrowed the gap in later releases, reaching 91.3 percent on GPQA Diamond with Opus 4.6. Both families scored in a similar 88 to 91 percent range on MMMLU. For your work, this means you should test both on sample problems from your field, since one may suit the exact style of reasoning your projects require better than the other.
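A practical way to run that comparison is a small harness that sends the same prompts to each model and scores the replies with checks you write yourself. The sketch below is hypothetical: `Task`, `pass_rate`, and `compare` are illustrative names, and each "model" is simply a Python function from prompt to reply, so you would wire in whichever official SDK you actually use. The stub models exist only so the example runs offline.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical interface: any function that takes a prompt and returns the model's text reply.
# Wire it to the official SDK you use; nothing in this sketch calls a real API.
ModelFn = Callable[[str], str]


@dataclass
class Task:
    prompt: str
    check: Callable[[str], bool]  # your own pass/fail rule for a reply


def pass_rate(model: ModelFn, tasks: list[Task]) -> float:
    """Fraction of tasks whose reply passes that task's check."""
    if not tasks:
        return 0.0
    passed = sum(1 for task in tasks if task.check(model(task.prompt)))
    return passed / len(tasks)


def compare(models: dict[str, ModelFn], tasks: list[Task]) -> dict[str, float]:
    """Run the same task set against every model and report each pass rate."""
    return {name: pass_rate(fn, tasks) for name, fn in models.items()}


if __name__ == "__main__":
    # Stub "models" so the sketch runs offline; replace them with real API calls.
    tasks = [
        Task("What is 12 * 12?", lambda reply: "144" in reply),
        Task("Name the capital of France.", lambda reply: "paris" in reply.lower()),
    ]
    models = {
        "model_a": lambda prompt: "144" if "12" in prompt else "Paris",
        "model_b": lambda prompt: "I am not sure.",
    }
    print(compare(models, tasks))  # {'model_a': 1.0, 'model_b': 0.0}
```

Keeping the checks strict and drawing the prompts from your real work tells you more about your own workload than a leaderboard gap of a point or two.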

Multimodal and Specialized Benchmarks

Video and mixed-media tests play to Gemini’s strengths. It scored 84.8 percent on Video-MME, a result that supports uploading videos for accurate summaries or suggestions, and it posted solid results elsewhere: 93.0 percent on the MRCR long-context test and 88.6 percent on the multilingual Global-MMLU-Lite. Claude led early ARC-AGI v2 tests with 8.6 percent versus 4.9 percent for Gemini. Opus 4.6 later achieved 72.7 percent on OSWorld-Verified, 65.4 percent on Terminal-Bench 2.0, and 68.8 percent on ARC-AGI-2.

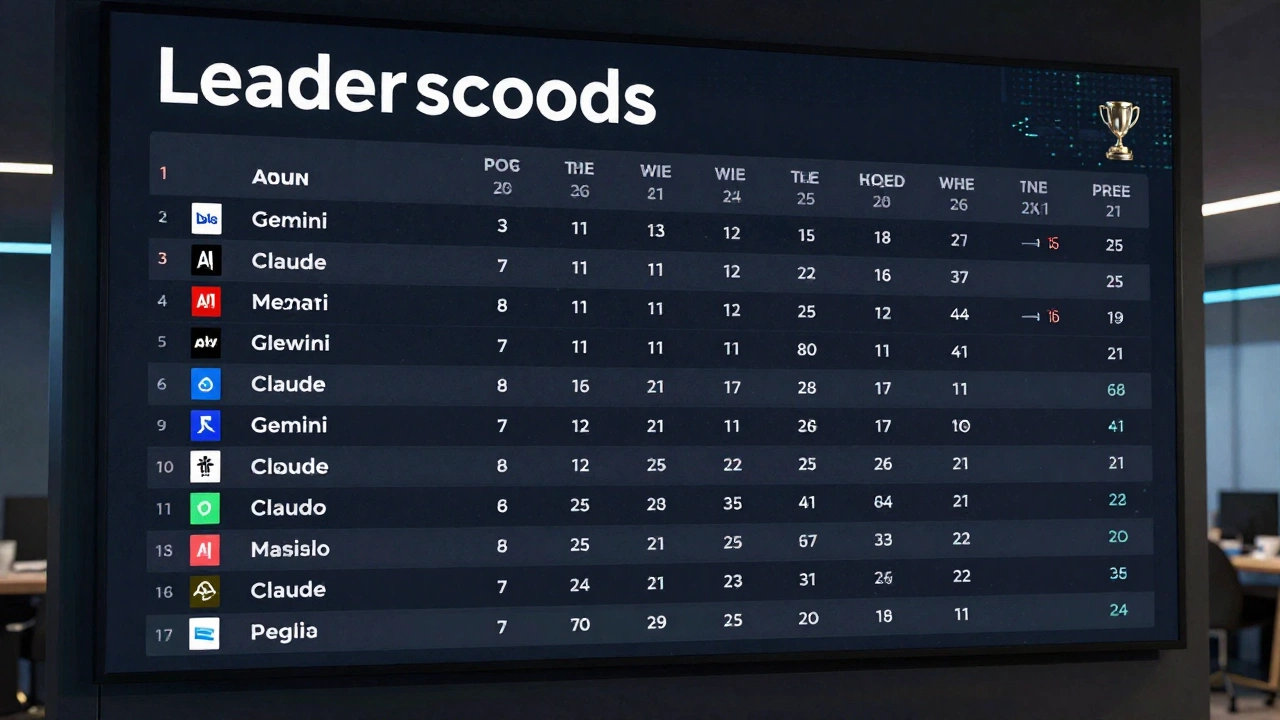

| Benchmark | Gemini 2.5 Pro | Claude Opus 4 Series |
|---|---|---|
| SWE-Bench Verified | 63.2% | 72.5% (80.8% in 4.6) |
| AIME 2025 | 83.0% | 75.5% |
| Video-MME | 84.8% | N/A |
| ARC-AGI v2 | 4.9% | 8.6% |

The table summarizes mostly early 2025 results; the 80.8 percent SWE-Bench figure comes from the later Opus 4.6. Later leaderboards showed the models trading places depending on the exact test. Always check current arenas, because new updates shift rankings fast.

Context Scale, Multimodal Power, and Speed

Working With Very Large Inputs

Large context windows change what you can ask an AI to do. Gemini 2.5 Pro’s ability to accept more than one million tokens lets you load entire books or large code repositories in one request, so you avoid breaking information into small pieces and losing the big picture. Claude reached a similar scale in its 2026 versions, but Gemini held an early practical advantage for the biggest jobs.

This feature helps researchers and analysts who must connect ideas spread across hundreds of documents. Answers are often more accurate because the model sees everything at once. For software teams, it means reviewing full applications without constant copy-and-paste steps.
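Before you paste a whole repository or document set into one request, it helps to estimate whether it fits. The sketch below assumes a rough rule of thumb of about four characters per token (real tokenizers vary by language and content, so use the provider's token-counting tools for exact numbers) and uses the 1,048,576-token input limit this article gives for Gemini 2.5 Pro. The helper names are hypothetical. If the estimate is too large, `chunk_docs` greedily packs whole documents into batches that each stay within the limit.

```python
# Input limit for Gemini 2.5 Pro as quoted in this article.
GEMINI_25_PRO_INPUT_LIMIT = 1_048_576

# Assumption: roughly 4 characters per token for English prose and code.
# Real tokenizers differ; use the provider's token counter for exact figures.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate using ceiling division on the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(docs: list[str], limit: int = GEMINI_25_PRO_INPUT_LIMIT) -> bool:
    """True if the estimated total for all documents stays within the limit."""
    return sum(estimate_tokens(doc) for doc in docs) <= limit


def chunk_docs(docs: list[str], limit: int = GEMINI_25_PRO_INPUT_LIMIT) -> list[list[str]]:
    """Greedily pack whole documents into batches whose estimates stay within the limit.
    A single document larger than the limit still gets its own batch and must be split separately."""
    batches: list[list[str]] = []
    current: list[str] = []
    used = 0
    for doc in docs:
        cost = estimate_tokens(doc)
        if current and used + cost > limit:
            batches.append(current)  # close the full batch and start a new one
            current, used = [], 0
        current.append(doc)
        used += cost
    if current:
        batches.append(current)
    return batches


if __name__ == "__main__":
    # Hypothetical corpus: about 500k + 500k + 250k estimated tokens.
    corpus = ["x" * 2_000_000, "y" * 2_000_000, "z" * 1_000_000]
    print(fits_in_context(corpus))  # False
    print([len(batch) for batch in chunk_docs(corpus)])  # [2, 1]
```

Packing whole documents rather than cutting at arbitrary offsets keeps each file intact, which matters for code review, where a function split across two requests loses its context.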

Handling Video, Audio, and Mixed Data

Native multimodal support gives Gemini an edge when your projects include video or sound. You can ask it to describe actions in footage or to combine audio with text instructions, which is useful for building apps that react to visual input or for creating content from real-world recordings. Claude improved its vision capabilities, but Gemini led many video-focused evaluations through 2025 and into 2026.

The difference appears clearly when you prototype web tools that need to understand screen recordings. Gemini turns those examples into working code faster in many tests. Your creative or analytical tasks gain speed and accuracy from this built-in skill set.

Speed, Integration, and Daily Use

Reports often note faster inference with Gemini across many setups. If you already work inside Google tools, the integration feels seamless: you move between documents, email, and AI assistance without extra steps. Claude performs well on platforms like Amazon Bedrock, but the Google ecosystem connection gives Gemini an accessibility boost for many users.

These practical factors affect how often you actually reach for the model during a busy day. Faster and cheaper access means you experiment more and finish projects quicker.

Pricing, Value, and Real-World Practicality

Cost Breakdown You Can Use Today

Price differences stand out sharply. Gemini 2.5 Pro costs roughly 1.25 dollars per million input tokens and 10 dollars per million output tokens (for prompts up to 200,000 tokens). Claude Opus 4 launched at 15 dollars per million input tokens and 75 dollars per million output tokens, so analysts described Gemini as seven to twelve times more cost-effective for many workloads: 15 / 1.25 = 12 on input and 75 / 10 = 7.5 on output. Even after later Claude price adjustments to around 5 and 25 dollars in certain tiers, the gap stayed large, at 4 times on input and 2.5 times on output.
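To see what those rates mean for your own workload, multiply your token counts by the per-million prices. This sketch uses only the list prices quoted in this section; the dictionary keys are illustrative labels rather than official API model IDs, and current rates (including Gemini 2.5 Pro's higher tier for prompts over 200,000 tokens) should come from each provider's pricing page.

```python
# List prices quoted in this article, in USD per million tokens: (input, output).
# Keys are illustrative labels, not official API model IDs.
PRICES_PER_MILLION = {
    "gemini-2.5-pro": (1.25, 10.00),        # prompts up to 200k tokens
    "claude-opus-4-launch": (15.00, 75.00),
    "claude-opus-adjusted": (5.00, 25.00),  # later, lower-priced tier
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for one workload at the list prices above."""
    in_price, out_price = PRICES_PER_MILLION[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


if __name__ == "__main__":
    # Hypothetical monthly workload: 10M input tokens, 2M output tokens.
    for name in PRICES_PER_MILLION:
        print(f"{name}: ${job_cost(name, 10_000_000, 2_000_000):,.2f}")
    # gemini-2.5-pro: $32.50
    # claude-opus-4-launch: $300.00
    # claude-opus-adjusted: $100.00
```

For that hypothetical mix, Gemini comes out about 9 times cheaper than Opus 4's launch pricing (300 / 32.5) and about 3 times cheaper than the adjusted tier (100 / 32.5), which falls inside the ratios computed above.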

You feel this immediately in high-volume work. Budgets stretch further, allowing more tests or larger data processing runs. For freelancers and smaller teams, the savings remove barriers to regular AI use, and the lower cost encourages longer conversations without watching token counters closely.

Value for Research and Large Scale Tasks

When you analyze big datasets or run repeated experiments, Gemini’s price-performance ratio shines: you accomplish more without raising spending. This makes it the practical pick for students, researchers, and anyone doing exploratory work. The model still delivers strong results on reasoning and multimodal tests, so you give up little capability in exchange for affordability.

Claude’s higher price reflects its focus on precision tasks where quality justifies the cost. Many companies accept the expense for critical development work where errors create expensive problems later. Your own priorities decide which approach fits your situation best.

Ecosystem and Accessibility Factors

Gemini connects naturally with Vertex AI and other Google Cloud services, which helps enterprise users who want single-vendor simplicity. Claude is available through multiple channels, including the Anthropic API and Amazon Bedrock. Both provide good developer tools, but the lower price and speed often make Gemini the default starting point for new projects in 2026.

Consider your existing tools and team skills when choosing. The model that slots into your workflow with least friction usually becomes the one you use most.

Agentic Capabilities and 2026 Trends

Autonomous Agents and Tool Use

Both models advanced tool calling and autonomous features by 2026. Claude Opus 4.6 stood out for reliable terminal and computer interactions, plus its Agent Teams system, which lets you assign multiple specialized agents to different parts of a big job. Gemini uses its video understanding to create working web apps from demonstration clips. Function calling improved in both, with fewer errors over time.
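On the application side, function calling follows the same basic loop with either model: the model proposes a tool name plus arguments, your code runs the matching function, and you send the result back. Each vendor defines its own request and response format, so the sketch below assumes a simplified, hypothetical JSON shape `{"name": ..., "arguments": {...}}` and stub tools. It is not the Claude or Gemini API and not Agent Teams; it only shows the host-side dispatch step, including returning an error to the model instead of crashing when it names an unknown tool or passes bad arguments.

```python
import json
from typing import Any, Callable


# Hypothetical stub tools; real ones would call your own services.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"


def add(a: float, b: float) -> float:
    return a + b


TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather, "add": add}


def dispatch(tool_call_json: str) -> str:
    """Run one tool call in the assumed shape {"name": ..., "arguments": {...}}
    and return a JSON string to send back to the model as the tool result."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    try:
        result = TOOLS[name](**call.get("arguments", {}))
    except TypeError as exc:  # e.g. missing or unexpected arguments from the model
        return json.dumps({"error": str(exc)})
    return json.dumps({"result": result})


if __name__ == "__main__":
    print(dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
    # {"result": 5}
    print(dispatch('{"name": "delete_files", "arguments": {}}'))
    # {"error": "unknown tool: delete_files"}
```

Keeping an explicit allow-list of tools means the model can only trigger functions you registered, and feeding errors back as data gives it a chance to correct the call on its next turn.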

These abilities let you automate repetitive work or explore ideas with less manual guidance. The best choice depends on whether you need deep reliability in command-line tasks or creative generation across media types.

Long Horizon Reasoning and Adaptive Effort

Adaptive modes that adjust thinking depth became standard. Claude earned praise for sustained performance on tasks that last hours, so you can trust it with complex debugging or planning that requires consistent focus. Gemini brings parallel strengths in math and large-context reasoning that help during broad exploration phases.

Tests on benchmarks like Humanity’s Last Exam and FrontierMath showed gains for both families. Your projects gain from these improvements no matter which model you pick. The key is matching the AI to the exact demands of each job.

Safety Alignment and Responsible Deployment

Anthropic’s focus on alignment appears in Claude’s design: the model aims to reduce harmful suggestions and stay helpful even in tricky situations. Google emphasizes safe deployment of Gemini across its products. Both companies publish evaluations that check for risks such as misaligned actions or security issues.

You benefit from these efforts when using AI for sensitive data or public-facing applications. Companies weigh these safety features heavily when selecting models for team-wide use in 2026.

Deciding Which AI Wins for Your Work

Best Tasks for Claude Opus 4

Choose Claude when your main needs involve coding, software engineering, or complex agent workflows. It leads many developer evaluations for output quality and precision, and long-running tasks that need focus across many steps play to its strengths. You get excellent results when debugging large systems, writing production code, or coordinating autonomous agents.

Teams that value careful prompt adherence and clean, structured responses often prefer this model family. The higher cost makes sense when quality directly affects your final product or saves expensive engineering hours later.

When Gemini 2.5 Pro Becomes Your Go To

Gemini wins for you when budget, versatility, or multimodal work matters most. Its strong price-performance supports heavy research or data analysis without constant cost worries, and its video and audio understanding opens new possibilities for content creation and app prototyping. Fast inference and Google tool connections add convenience to daily use.

Students, analysts, and product teams often start here because they can experiment freely. The large context window handles big-picture work while keeping expenses reasonable, which makes Gemini the versatile everyday option for many users.

Final Advice and What to Watch Next

By April 2026, the models and their successors were trading leads on different leaderboards. Claude frequently topped coding and agentic rankings, while Gemini excelled in efficiency, value, aggregate reasoning scores, and multimodal tasks. No universal champion exists, so your specific use cases should guide the decision.

Test both models on sample tasks from your workflow. Check live leaderboards such as LMArena (formerly LMSYS Chatbot Arena) or LLM Stats regularly, because new previews can change standings overnight. Rapid updates from Google, Anthropic, and their competitors keep pushing capabilities higher, so stay flexible and combine the models when it makes sense. The real winner in 2026 is you, when you match the right AI to the job at hand.